Fake news continues to haunt social media sites. Facebook, for one, still struggles despite its well-publicised steps to deal with it.

Politifact

There has always been fake, distorted or biased news, though perhaps not on the scale of the fake US election news, and it will continue as long as people want to believe it because they agree with it or it feeds their biases. Nevertheless, the efforts of social media, particularly Facebook, to get it under control have been somewhat successful, with some painful missteps.

Facebook’s recent high-profile fake news blunder involved the site’s “Safety Check” page, which promoted a story that incorrectly identified the suspect in the recent Las Vegas mass shooting. The story got through Facebook’s fact-checking system, put in place in March this year.

An earlier story highlights the reverse psychology of some users. The fact-checking system labelled an article (on Irish slaves) as possible “fake news”, warning users against sharing it. The opposite happened: conservative groups decided that Facebook was trying to silence the story and spread the word, and traffic to the story skyrocketed.

When two or more fact-checkers debunk an article, it is supposed to get a “disputed” tag that warns users before they share the piece and is attached to the article in news feeds.
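The tagging rule described above can be pictured as a simple threshold check. The sketch below is purely illustrative: the `Article` class, its fields and the `DISPUTE_THRESHOLD` constant are hypothetical names invented for this example, not anything Facebook has published, and the real system is certainly more involved.

```python
from dataclasses import dataclass, field

# Hypothetical threshold, taken from the article's description:
# "two or more fact-checkers".
DISPUTE_THRESHOLD = 2


@dataclass
class Article:
    """Illustrative stand-in for a shared story; not a real Facebook type."""
    title: str
    debunked_by: set[str] = field(default_factory=set)

    def record_debunk(self, fact_checker: str) -> None:
        # A set means the same fact-checker is only counted once.
        self.debunked_by.add(fact_checker)

    @property
    def is_disputed(self) -> bool:
        # The tag applies once enough distinct fact-checkers have debunked it.
        return len(self.debunked_by) >= DISPUTE_THRESHOLD


story = Article("Example story")
story.record_debunk("Snopes")
print(story.is_disputed)  # False: only one fact-checker so far
story.record_debunk("PolitiFact")
print(story.is_disputed)  # True: two distinct fact-checkers
```

Even in this toy version, nothing is flagged until a second fact-checker weighs in, which is one reason a label can arrive only after a story has already spread.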

The spreading of this piece after it was debunked and branded “disputed” is one of many examples of the pitfalls of Facebook’s partnering with third-party fact-checkers and publicly flagging fake news. Articles formally debunked by Facebook’s fact-checking partners – including the Associated Press, Snopes, ABC News and PolitiFact – frequently remain on the site without the “disputed” tag warning users about the content. And when fake news stories do get branded as potentially false, the label often comes after the story has already gone viral and the damage has been done. Even in those cases, it’s unclear to what extent the flag actually limits the spread of propaganda.

Facebook’s efforts to curb fake news followed a widespread backlash over the site’s role in proliferating misinformation during the 2016 presidential election. The rocky rollout of Facebook fact-checking is as much a product of the enormity of the problem of Internet propaganda as it is a reflection of what critics say is a failure by the company to take this challenge seriously.

AP Fact check

Last year, Facebook faced growing criticism that it allowed fake election news to outperform real news and created filter bubbles that facilitated the increasing polarisation of voters. In response, Facebook announced that it would work to stop misinformation in part by letting users report fake news articles, which independent fact-checking groups could then review.

While some of the fact-checking groups said the collaboration has been a productive step in the right direction, a review of content suggests that the labour going into the checks may have little consequence.

ABC News, for example, has a total of 12 stories on its site that its reporters have debunked, but versions of more than half of those stories can still be shared on Facebook without the disputed tag, even though they were proven false.

There are a number of sites that promote fake news as a “joke” to see how gullible readers are and how far the stories spread. The fact-checking system catches these as well. One well-known fake news writer, Paul Horner, said some of his websites have been blocked on Facebook, but that other articles have gone unchecked, including one famously saying Trump issued an order allowing bald eagles to be hunted and another about the president cancelling Saturday Night Live.

The next steps

In its latest effort to combat misinformation and fake news, Facebook is testing a feature that gives users additional information around articles shared in the News Feed. The feature, indicated by a letter “i” icon near the headline of an article, will provide users with contextual information like the publisher’s Facebook and Wikipedia pages, related articles on the topic, and information on how other users are sharing the article.

The feature was designed to help users identify phoney publisher accounts. If a publisher doesn’t have a Facebook or Wikipedia page available, users will know to take the information with a grain of salt and remain cautious about the publisher’s validity. Although this does not necessarily curb the amount of fake news being spread on the platform, users may be more likely to identify false information on Facebook in the first place.

Snopes

The announcement of this feature is timely, as user scepticism of news on the platform, such as coverage of the Las Vegas massacre, may currently be particularly high.

Meanwhile, Wikipedia will likely become an increasingly important element of a publisher’s image. As Facebook more widely rolls out the new tool and more users begin to lean on Wikipedia to help them judge an article’s credibility, publishers will likely put more emphasis on ensuring their Wikipedia page accurately and favourably reflects them.

This may make users cautious about publishers’ Wikipedia pages, as certain publishers may have an incentive to curate their Wikipedia profile. However, Wikipedia has over 130,000 accounts actively making edits, with over 10 edits being made per second.

Trust is timely. In an era in which fake news is trending, and brands are pulling advertising from large publishers because they don’t want their messaging associated with offensive content, trust is a critical factor that brands consider when re-evaluating digital ad strategies.

At the FCC’s Journalism Conference in April, keynote speaker and new

At the FCC’s Journalism Conference in April, keynote speaker and new  Geoffrey Colon, Senior Marketing Communications Designer at Microsoft, and author of Disruptive Marketing, echoed these sentiments when the FCC asked him for his 2018 social media trend predictions.

Geoffrey Colon, Senior Marketing Communications Designer at Microsoft, and author of Disruptive Marketing, echoed these sentiments when the FCC asked him for his 2018 social media trend predictions. Sir David Tang’s last visit to the FCC was in February 2016. Photo: FCC

Sir David Tang’s last visit to the FCC was in February 2016. Photo: FCC Canada’s Globe and Mail Asia correspondent Nathan VanderKlippe used Twitter to spread word of his detention.

Canada’s Globe and Mail Asia correspondent Nathan VanderKlippe used Twitter to spread word of his detention. Voice of America reporter Ye Bing’s Twitter profile.

Voice of America reporter Ye Bing’s Twitter profile. Lu Yuyu: Jailed for documenting protests in China.

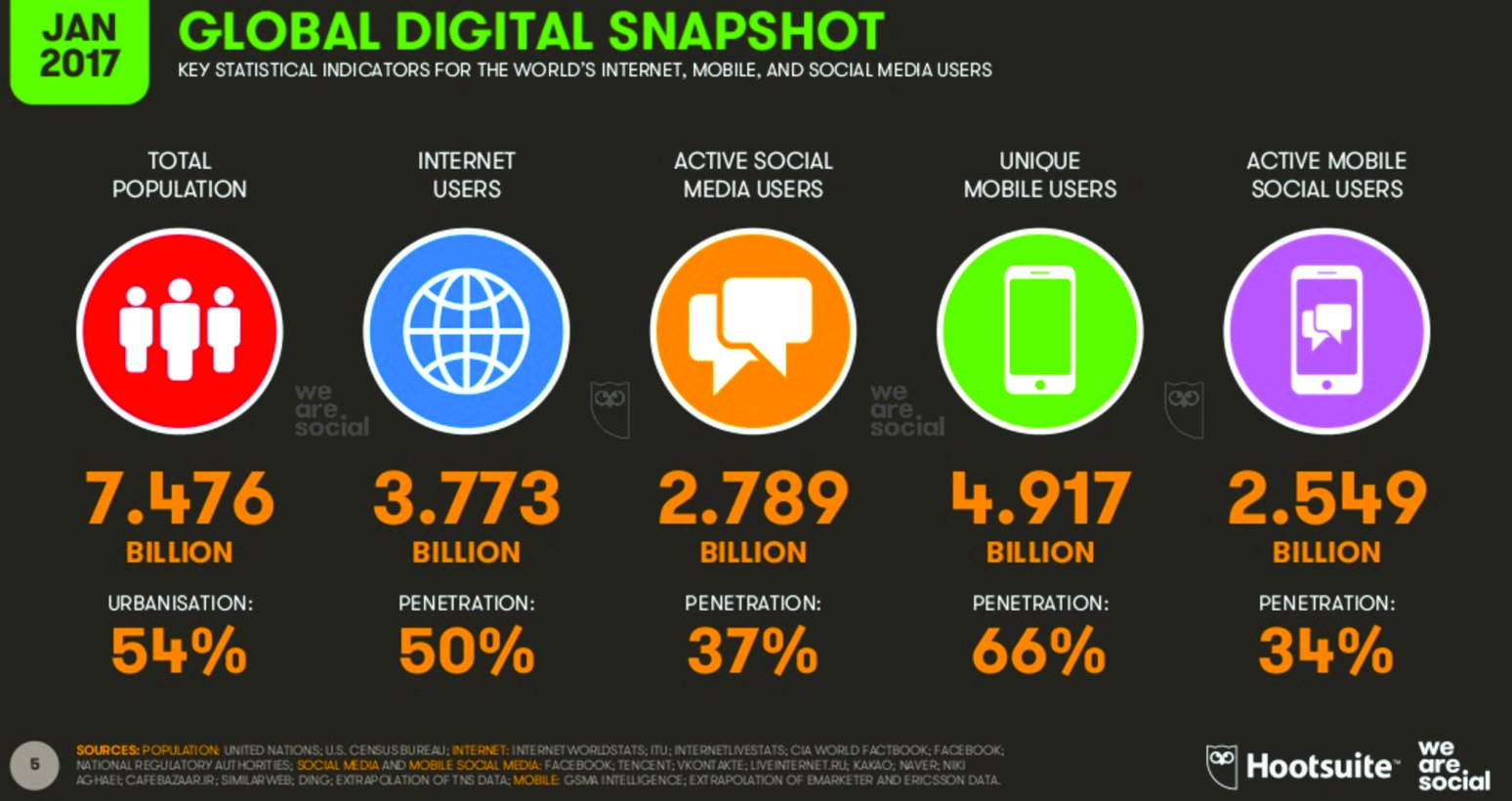

Lu Yuyu: Jailed for documenting protests in China. SCMP photographer Felix Wong was prevented from entering Macau. Photo: SCMP

SCMP photographer Felix Wong was prevented from entering Macau. Photo: SCMP Jon and Corky Adis

Jon and Corky Adis Regina Chan

Regina Chan Tamora Chan

Tamora Chan Dr. Mehdi Fakheri

Dr. Mehdi Fakheri Bennett Marcus

Bennett Marcus Davinia Tang

Davinia Tang Dr Yuen Cheuk Wai

Dr Yuen Cheuk Wai Marguerite T Yates

Marguerite T Yates Karson Yiu

Karson Yiu